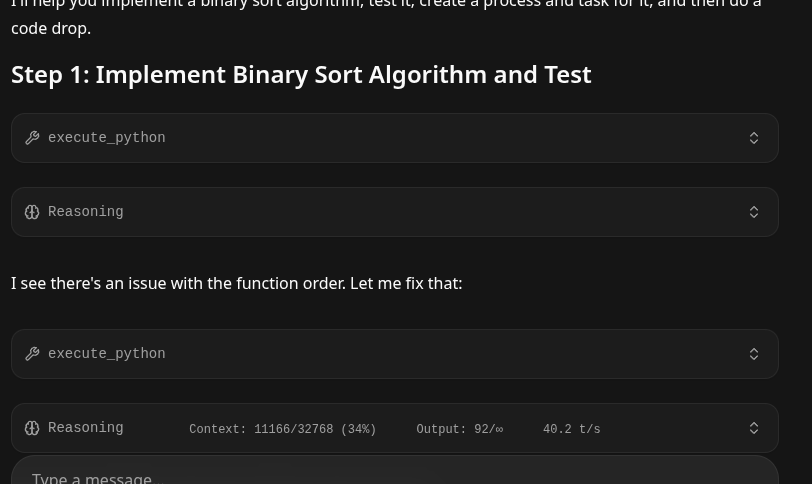

StudentLLM - Qwen2.5-Coder-7B-Instruct Q6_K / Qwen3.5 Agentic on a Ryzen 5 2600 / RTX 3060 Ti. Production LLM or not? YES!

We look at a StudentLLM setup that gets as much productivity as we can out of limited hardware.

- System specs: Ryzen 5 2600 (6 cores / 12 threads, ~15,000 CPU PassMark), 16 GB RAM, 1x RTX 3060 Ti 8 GB.

The question: on a very basic budget PC, can a university student get something useful and productive? Not a chatbot, but something with agentic workflow tools. So we dug out a 3060 Ti, took out most of the RAM, and started writing!

- Please note that this recipe also works on much larger, high-end systems. Simply reuse it and give it a 35B, a 122B, or whatever model you like!

Let's get started!

0. Install the basic build tools and compilers

sudo apt install build-essential wget git python3 cmake -y

sudo apt install libcurl4-openssl-dev

A. Installing your Nvidia Drivers

- This step varies with your video card, and you can run into issues. There are literally dozens of Nvidia drivers and server drivers, plus the open-source nouveau driver that often ships with a standard Linux installation.

- The most reliable option is the last one in this section, a direct install of driver 595 from Nvidia, but you might get it working from your distribution's repository. To prevent conflicts, we blacklist nouveau.

- Driver 550, which many repositories carry, might conflict with your current kernel, yet your auto-install may still select it. Driver 595 (as of April 2026) works very well, even with an older card like our 3060 Ti.

- Here is what we found worked. This is the step where you can end up going in circles (we reinstalled our drivers about six times), so don't feel bad if it takes several attempts.

Before Doing Anything: Install the Linux Kernel Headers

sudo apt install linux-headers-$(uname -r)

- The linux-headers package provides the kernel files that the driver modules are built against.
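If the driver build later fails, missing headers are a common cause. The following is a quick check, offered as a sketch. `headers_present` is a hypothetical helper that looks for the `/lib/modules/<kernel>/build` tree that out-of-tree modules such as the Nvidia driver are compiled against. The second argument exists only so the check can be pointed at a test directory.

```shell
# Hypothetical helper: succeed if the kernel build tree exists.
# $1 = kernel version (defaults to the running kernel)
# $2 = filesystem root (defaults to "", i.e. the real /)
headers_present() {
  kver=${1:-$(uname -r)}
  root=${2:-}
  if [ -d "$root/lib/modules/$kver/build" ]; then
    echo "headers found for $kver"
  else
    echo "missing headers for $kver: run sudo apt install linux-headers-$kver" >&2
    return 1
  fi
}
```

Running `headers_present` with no arguments checks the kernel you are currently booted into.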

First Try

sudo apt install nvidia-driver-full nvidia-cuda-toolkit -y

If it issues errors, try blacklisting the nouveau driver, as it can conflict.

sudo apt update && sudo apt full-upgrade -y

sudo apt autoremove -y

sudo nano /etc/modprobe.d/blacklist-nouveau.conf

Add:

blacklist nouveau

options nouveau modeset=0

alias nouveau off

Update initramfs and reboot:

sudo update-initramfs -u && sudo reboot

Direct Driver Pull from Nvidia

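If everything fails, simply do a direct pull from Nvidia, purging all old drivers first. Quote the pattern so that your shell passes it to apt instead of expanding it against filenames:

sudo apt purge '*nvidia*'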

wget https://us.download.nvidia.com/XFree86/Linux-x86_64/595.58.03/NVIDIA-Linux-x86_64-595.58.03.run

chmod +x NVIDIA-Linux-x86_64-595.58.03.run

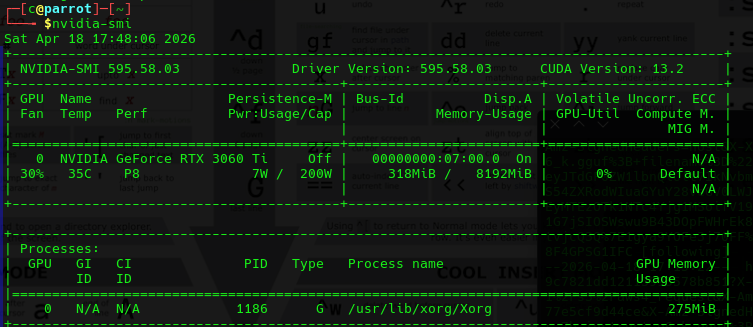

sudo ./NVIDIA-Linux-x86_64-595.58.03.run

Driver Confirmation: nvidia-smi Will Confirm You're Good to Go!

nvidia-smi

- In its output, pay particular attention to the top-right corner. It shows the highest CUDA version that your GPU and driver can support (here, CUDA Version: 13.2).
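You can script this check, for example before choosing which CUDA Toolkit to install. The following sketch pulls the CUDA version out of the nvidia-smi header and compares it with a target version. `cuda_version_from_smi` and `version_ge` are hypothetical helper names, and `version_ge` relies on GNU `sort -V`.

```shell
# Print the "CUDA Version: X.Y" value from nvidia-smi's header (read on stdin).
cuda_version_from_smi() {
  sed -n 's/.*CUDA Version: *\([0-9][0-9.]*\).*/\1/p' | head -n 1
}

# Succeed if version $1 >= version $2, using GNU sort's version ordering.
version_ge() {
  [ "$(printf '%s\n%s\n' "$1" "$2" | sort -V | head -n 1)" = "$2" ]
}
```

Usage example: `v=$(nvidia-smi | cuda_version_from_smi); version_ge "$v" 13.2 && echo "driver supports CUDA 13.2"`.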

B. Installing Cuda Toolkit 13.2

- Next we need to install the Nvidia CUDA Toolkit (latest version 13.2). It provides the all-important nvcc compiler, which will build our custom TurboQuant-enabled llama.cpp shortly. This matters because TurboQuant's compressed KV cache is what lets us fit as large a context as possible.

wget https://developer.download.nvidia.com/compute/cuda/13.2.0/local_installers/cuda-repo-debian13-13-2-local_13.2.0-595.45.04-1_amd64.deb

sudo dpkg -i cuda-repo-debian13-13-2-local_13.2.0-595.45.04-1_amd64.deb

sudo cp /var/cuda-repo-debian13-13-2-local/cuda-*-keyring.gpg /usr/share/keyrings/

sudo apt-get update

sudo apt-get -y install cuda-toolkit-13-2

nvcc --version

Note: the toolkit installs nvcc completely but does not add it to your PATH! Seriously, why? To fix this, edit your ~/.bashrc and add:

export PATH=/usr/local/cuda-13.2/bin:$PATH

Then re-source your ~/.bashrc:

source ~/.bashrc

When it works, it will show up as:

$ nvcc --version

nvcc: NVIDIA (R) Cuda compiler driver

Copyright (c) 2005-2026 NVIDIA Corporation

Built on Thu_Mar_19_11:12:51_PM_PDT_2026

Cuda compilation tools, release 13.2, V13.2.78

Build cuda_13.2.r13.2/compiler.37668154_0

Support
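If you set up machines repeatedly, appending the PATH line blindly adds a duplicate each time. The following idempotent alternative is offered as a sketch. `add_cuda_path` is a hypothetical helper that writes the export line only when that exact line is not already in the file.

```shell
# Append the CUDA bin directory to an rc file once.
# $1 = rc file (default ~/.bashrc), $2 = CUDA prefix (default /usr/local/cuda-13.2)
add_cuda_path() {
  rc=${1:-$HOME/.bashrc}
  cuda=${2:-/usr/local/cuda-13.2}
  line="export PATH=$cuda/bin:\$PATH"   # \$PATH stays literal in the file
  touch "$rc"
  # -x: whole-line match, -F: fixed string (no regex surprises)
  if ! grep -qxF "$line" "$rc"; then
    printf '%s\n' "$line" >> "$rc"
  fi
}
```

Run `add_cuda_path` and then `source ~/.bashrc`. Running it a second time leaves the file unchanged.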

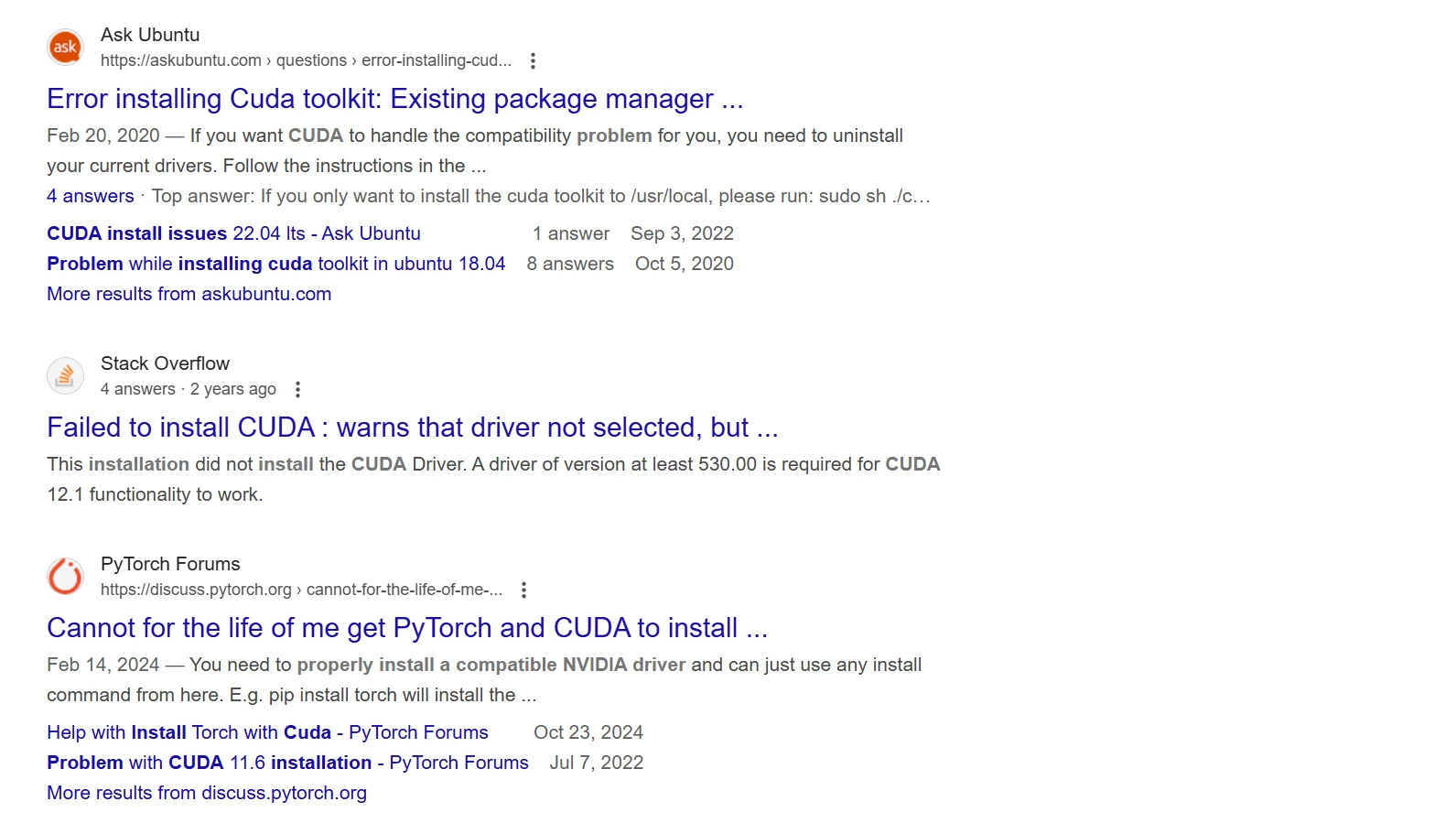

- Matching Nvidia drivers with the CUDA Toolkit can be so problematic that there are dedicated troubleshooting pages. Do consider:

C. Installing TurboQuant Forked Llama.cpp

Once that is done, we pull the TurboQuant-enabled fork of llama.cpp. It shrinks the KV cache significantly, which lets us squeeze as much as we can out of our house LLM. This is the last challenging step, because you build the fork from source and it prefers a specific configuration.

C.1. You might need to update cmake to the latest version before you continue. It's not hard, and here is how:

wget https://github.com/Kitware/CMake/releases/download/v4.3.1/cmake-4.3.1-linux-x86_64.sh

chmod +x ./cmake-4.3.1-linux-x86_64.sh

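./cmake-4.3.1-linux-x86_64.sh

- This just uncompresses the archive. You may then need to copy the bin files to /usr/bin or make a symbolic link (ln -s). If a copied cmake later complains that it cannot find CMAKE_ROOT, use the symbolic link instead, because cmake locates its modules relative to its real install directory.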

cd cmake-4.3.1-linux-x86_64/bin

sudo cp * /usr/bin

Once you are there (however you get there):

c@dragon-192-168-1-3:~/PythonProject/TurboResearcher2/cmake/cmake-4.3.1-linux-x86_64/bin$ cmake --version

cmake version 4.3.0

CMake suite maintained and supported by Kitware (kitware.com/cmake).

Here is the TurboQuant fork of llama.cpp, with full credit to the excellent TheTom, who built it!

- Pull the repository and enter its directory:

git clone https://github.com/TheTom/llama-cpp-turboquant.git

cd llama-cpp-turboquant

- Make a custom script inside it named install.sh and put the following in it. Note: this is for an Nvidia installation using CUDA. If you have a Mac you will need a different backend (Metal rather than CUDA), and the README typically lists the alternatives.

cmake -B build \

-DGGML_CUDA=ON \

-DCMAKE_CUDA_COMPILER=/usr/local/cuda-13.2/bin/nvcc \

-DCUDAToolkit_ROOT=/usr/local/cuda-13.2 \

-DCMAKE_CUDA_ARCHITECTURES="86;89" \

-DCMAKE_BUILD_TYPE=Release

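cmake --build build --config Release -j$(nproc)

- Please note that we specified two architectures. 86 covers Ampere cards such as the 3060 Ti, and 89 covers Ada cards such as the RTX 4080, so the build should work out of the box if you upgrade to a 40-series GPU. RTX 50-series cards such as the 5080 are compute capability 12.0, so add 120 for those (100 targets data-center Blackwell GPUs). LLAMA_CUDA is the older, deprecated name for the GGML_CUDA option.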
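Rather than guessing, you can ask the driver which architectures your installed cards need. The following sketch assumes a driver recent enough to support nvidia-smi's `compute_cap` query field. `cuda_archs_from_caps` is a hypothetical helper that turns one compute capability per line into a `CMAKE_CUDA_ARCHITECTURES` list.

```shell
# Read compute capabilities ("8.6", "12.0", ...) one per line on stdin and
# print a de-duplicated, semicolon-separated list such as "86;89;120".
cuda_archs_from_caps() {
  tr -d '. \r' |      # "8.6" -> "86", also strip stray spaces/CRs
    sort -u -n |      # numeric order, drop duplicates (multi-GPU boxes)
    paste -sd ';' -   # join lines with ';'
}
```

For example, `nvidia-smi --query-gpu=compute_cap --format=csv,noheader | cuda_archs_from_caps` should print `86` on a 3060 Ti (compute capability 8.6, as the llama-server startup output in this post also reports). You can then pass that value to `-DCMAKE_CUDA_ARCHITECTURES`.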

- Make it an executable and execute it:

chmod +x ./install.sh

./install.sh

- Now wait about 15-20 minutes for it to compile.

When it finally finishes, there will be a build/bin directory inside the repository, and you simply copy its contents to /usr/bin. If you already have another llama.cpp that you don't want this build to conflict with, put this build in its own directory and use absolute paths in all references, e.g. /usr/bin/customllm/llama-server.

Copy all the compiled products to /usr/bin from inside the build directory:

cd build/bin

sudo cp * /usr/bin

Make sure it's working and ready to go:

llama-server

ggml_cuda_init: found 1 CUDA devices (Total VRAM: 7839 MiB):

Device 0: NVIDIA GeForce RTX 3060 Ti, compute capability 8.6, VMM: yes, VRAM: 7839 MiB

main: n_parallel is set to auto, using n_parallel = 4 and kv_unified = true

build_info: b8967-627ebbc6e

system_info: n_threads = 6 (n_threads_batch = 6) / 12 | CUDA : ARCHS = 890 | USE_GRAPHS = 1 | PEER_MAX_BATCH_SIZE = 128 | CPU : SSE3 = 1 | SSSE3 = 1 | AVX = 1 | AVX2 = 1 | F16C = 1 | FMA = 1 | BMI2 = 1 | LLAMAFILE = 1 | OPENMP = 1 | REPACK = 1 |

init: using 11 threads for HTTP server

D. Installing the Qwen2.5-Coder-7B-Instruct-GGUF

- We chose the 6-bit (Q6_K) quantization of Qwen2.5-Coder-7B-Instruct, which should give us as much coding ability as we can fit on an 8 GB card. Six bits per weight keeps the file small (5.82 GiB per the load log) and leaves room for the KV cache, while keeping as much of the model's power as we can.
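The following back-of-the-envelope sketch shows where the memory goes. Each of the K and V caches stores context × layers × n_embd_k_gqa values. The startup log later in this post gives 64000 cells, 28 layers and n_embd_k_gqa = 512, and reports 341.80 MiB for K under turbo3, which works out to about 3.125 bits per value. `kv_cache_mib` is a hypothetical helper, and it assumes a flat average bits-per-value with no other overhead.

```shell
# Size in MiB of ONE of the K or V caches.
# $1 = context cells, $2 = layers, $3 = n_embd_k_gqa (per-layer K/V width),
# $4 = average bits stored per value (16 for f16; ~3.125 implied for turbo3)
kv_cache_mib() {
  awk -v ctx="$1" -v layers="$2" -v dim="$3" -v bits="$4" 'BEGIN {
    bytes = ctx * layers * dim * bits / 8   # total bits -> bytes
    printf "%.2f\n", bytes / 1048576        # bytes -> MiB
  }'
}

# kv_cache_mib 64000 28 512 3.125   -> 341.80   (turbo3, matches the log)
# kv_cache_mib 64000 28 512 16      -> 1750.00  (same cache at f16)
```

At f16, K and V together would take 3500 MiB, compared with 683.72 MiB under turbo3. The log's projection adds that turbo3 figure to the 5532 MiB of weights on the GPU and the 311 MiB compute buffer, which gives the 6527 MiB total. With an f16 cache the same setup would not fit in 8 GB.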

Pulling the model is simple. We recommend a working ~/models directory, so:

mkdir -p ~/models && cd ~/models

wget "https://huggingface.co/khjvgvyfc/Qwen2.5-Coder-7B-Instruct-GGUF/resolve/main/qwen2.5-coder-7b-instruct-q6_k.gguf?download=true"

Almost there.

The command-line options for llama.cpp and llama-server can get really long, so it is smart to save your command-line calls in a script. You can then tweak them as you like, and if you come back a long time later you won't have forgotten the myriad options available to you. We also gave the file a simpler name so it is easier to reference, and we recommend absolute paths in the scripts:

mv qwen2.5-coder-7b-instruct-q6_k.gguf\?download\=true qwen2.5-coder-7b-instruct-q6_k.gguf

/usr/bin/llama-server --jinja \

-m /home/c/models/qwen2.5-coder-7b-instruct-q6_k.gguf \

--host 192.168.1.4 \

--n-gpu-layers 999 \

--override-tensor "\.ffn_.*_exps\.weight=CPU" \

--flash-attn on \

--cache-type-k turbo3 \

--cache-type-v turbo3 \

-c 64000 \

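--temp 0.7

The --override-tensor line pins MoE expert weights to the CPU. Qwen2.5-Coder-7B is a dense model with no expert tensors (the load log reports n_expert = 0), so for this model the flag has no effect. If the server boots correctly, it prints a long startup log, and a reference copy follows the faster configuration below.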

For an even FASTER configuration, try this one, suggested by https://x.com/iam_shanmukha (full credit!). Note that it runs the larger Qwen3.6-35B-A3B model:

/usr/bin/llama-server --jinja \

-m /home/c/models/Qwen3.6-35B-A3B-UD-Q6_K_XL.gguf \

--host 192.168.1.3 \

--fit on \

--flash-attn on \

--spec-type ngram-mod \

--spec-ngram-size-n 24 \

--n-cpu-moe-draft 39 \

-t 14 \

--chat-template-kwargs '{"preserve_thinking":true}' \

--cache-type-k turbo3 \

--cache-type-v turbo4 \

-c 512000 \

--temp 0.7

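- We tried this and did see speed-ups, to about 35 tokens/s. However, it was noted that it might make more errors on REALLY LARGE contexts of 100k tokens! So you might put both configurations in separate scripts and try the one you like best. The -t 14 thread count came with the original suggestion. The Ryzen 5 2600 has 12 hardware threads, so you may want to lower it to match your CPU.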
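The startup log that follows comes from the Qwen2.5-Coder-7B configuration. You can save your own log with `2>&1 | tee server.log` so that both output streams are captured. The hypothetical helper below then checks two lines we look for in it: the TurboQuant KV-cache message and the "offloaded N/N layers to GPU" count. This is a sketch, and it assumes the fork keeps these exact log wordings.

```shell
# Check a saved llama-server startup log.
# $1 = log file. Prints "ok: ..." and succeeds only if TurboQuant is active
# and every layer was offloaded to the GPU.
check_startup_log() {
  logf=$1
  if ! grep -q 'TurboQuant rotation matrices initialized' "$logf"; then
    echo "TurboQuant KV cache not active" >&2
    return 1
  fi
  # "load_tensors: offloaded 29/29 layers to GPU" -> "29 29"
  counts=$(sed -n 's/.*offloaded \([0-9]*\)\/\([0-9]*\) layers to GPU.*/\1 \2/p' "$logf" | head -n 1)
  if [ -z "$counts" ]; then
    echo "no layer offload line found" >&2
    return 1
  fi
  set -- $counts   # $1 = offloaded, $2 = total
  if [ "$1" -ne "$2" ]; then
    echo "only $1 of $2 layers offloaded to GPU" >&2
    return 1
  fi
  echo "ok: TurboQuant active, $1/$2 layers on GPU"
}
```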

srv load_model: loading model '/home/c/models/qwen2.5-coder-7b-instruct-q6_k.gguf'

common_init_result: fitting params to device memory, for bugs during this step try to reproduce them with -fit off, or provide --verbose logs if the bug only occurs with -fit on

llama_params_fit_impl: projected to use 6527 MiB of device memory vs. 7382 MiB of free device memory

llama_params_fit_impl: cannot meet free memory target of 1024 MiB, need to reduce device memory by 168 MiB

llama_params_fit_impl: context size set by user to 64000 -> no change

llama_params_fit: failed to fit params to free device memory: n_gpu_layers already set by user to 999, abort

llama_params_fit: fitting params to free memory took 0.48 seconds

llama_model_load_from_file_impl: using device CUDA0 (NVIDIA GeForce RTX 3060 Ti) (0000:07:00.0) - 7382 MiB free

llama_model_loader: loaded meta data with 29 key-value pairs and 339 tensors from /home/c/models/qwen2.5-coder-7b-instruct-q6_k.gguf (version GGUF V3 (latest))

llama_model_loader: Dumping metadata keys/values. Note: KV overrides do not apply in this output.

llama_model_loader: - kv 0: general.architecture str = qwen2

llama_model_loader: - kv 1: general.type str = model

llama_model_loader: - kv 2: general.name str = Qwen2.5 Coder 7B Instruct GGUF

llama_model_loader: - kv 3: general.finetune str = Instruct-GGUF

llama_model_loader: - kv 4: general.basename str = Qwen2.5-Coder

llama_model_loader: - kv 5: general.size_label str = 7B

llama_model_loader: - kv 6: qwen2.block_count u32 = 28

llama_model_loader: - kv 7: qwen2.context_length u32 = 131072

llama_model_loader: - kv 8: qwen2.embedding_length u32 = 3584

llama_model_loader: - kv 9: qwen2.feed_forward_length u32 = 18944

llama_model_loader: - kv 10: qwen2.attention.head_count u32 = 28

llama_model_loader: - kv 11: qwen2.attention.head_count_kv u32 = 4

llama_model_loader: - kv 12: qwen2.rope.freq_base f32 = 1000000.000000

llama_model_loader: - kv 13: qwen2.attention.layer_norm_rms_epsilon f32 = 0.000001

llama_model_loader: - kv 14: general.file_type u32 = 18

llama_model_loader: - kv 15: tokenizer.ggml.model str = gpt2

llama_model_loader: - kv 16: tokenizer.ggml.pre str = qwen2

llama_model_loader: - kv 17: tokenizer.ggml.tokens arr[str,152064] = ["!", "\"", "#", "$", "%", "&", "'", ...

llama_model_loader: - kv 18: tokenizer.ggml.token_type arr[i32,152064] = [1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, ...

llama_model_loader: - kv 19: tokenizer.ggml.merges arr[str,151387] = ["Ġ Ġ", "ĠĠ ĠĠ", "i n", "Ġ t",...

llama_model_loader: - kv 20: tokenizer.ggml.eos_token_id u32 = 151645

llama_model_loader: - kv 21: tokenizer.ggml.padding_token_id u32 = 151643

llama_model_loader: - kv 22: tokenizer.ggml.bos_token_id u32 = 151643

llama_model_loader: - kv 23: tokenizer.ggml.add_bos_token bool = false

llama_model_loader: - kv 24: tokenizer.chat_template str = {%- if tools %}\n {{- '<|im_start|>...

llama_model_loader: - kv 25: general.quantization_version u32 = 2

llama_model_loader: - kv 26: split.no u16 = 0

llama_model_loader: - kv 27: split.count u16 = 0

llama_model_loader: - kv 28: split.tensors.count i32 = 339

llama_model_loader: - type f32: 141 tensors

llama_model_loader: - type q6_K: 198 tensors

print_info: file format = GGUF V3 (latest)

print_info: file type = Q6_K

print_info: file size = 5.82 GiB (6.56 BPW)

load: 0 unused tokens

load: control-looking token: 128247 '</s>' was not control-type; this is probably a bug in the model. its type will be overridden

load: printing all EOG tokens:

load: - 128247 ('</s>')

load: - 151643 ('<|endoftext|>')

load: - 151645 ('<|im_end|>')

load: - 151662 ('<|fim_pad|>')

load: - 151663 ('<|repo_name|>')

load: - 151664 ('<|file_sep|>')

load: special tokens cache size = 23

load: token to piece cache size = 0.9310 MB

print_info: arch = qwen2

print_info: vocab_only = 0

print_info: no_alloc = 0

print_info: n_ctx_train = 131072

print_info: n_embd = 3584

print_info: n_embd_inp = 3584

print_info: n_layer = 28

print_info: n_head = 28

print_info: n_head_kv = 4

print_info: n_rot = 128

print_info: n_swa = 0

print_info: is_swa_any = 0

print_info: n_embd_head_k = 128

print_info: n_embd_head_v = 128

print_info: n_gqa = 7

print_info: n_embd_k_gqa = 512

print_info: n_embd_v_gqa = 512

print_info: f_norm_eps = 0.0e+00

print_info: f_norm_rms_eps = 1.0e-06

print_info: f_clamp_kqv = 0.0e+00

print_info: f_max_alibi_bias = 0.0e+00

print_info: f_logit_scale = 0.0e+00

print_info: f_attn_scale = 0.0e+00

print_info: n_ff = 18944

print_info: n_expert = 0

print_info: n_expert_used = 0

print_info: n_expert_groups = 0

print_info: n_group_used = 0

print_info: causal attn = 1

print_info: pooling type = -1

print_info: rope type = 2

print_info: rope scaling = linear

print_info: freq_base_train = 1000000.0

print_info: freq_scale_train = 1

print_info: n_ctx_orig_yarn = 131072

print_info: rope_yarn_log_mul = 0.0000

print_info: rope_finetuned = unknown

print_info: model type = 7B

print_info: model params = 7.62 B

print_info: general.name = Qwen2.5 Coder 7B Instruct GGUF

print_info: vocab type = BPE

print_info: n_vocab = 152064

print_info: n_merges = 151387

print_info: BOS token = 151643 '<|endoftext|>'

print_info: EOS token = 151645 '<|im_end|>'

print_info: EOT token = 151645 '<|im_end|>'

print_info: PAD token = 151643 '<|endoftext|>'

print_info: LF token = 198 'Ċ'

print_info: FIM PRE token = 151659 '<|fim_prefix|>'

print_info: FIM SUF token = 151661 '<|fim_suffix|>'

print_info: FIM MID token = 151660 '<|fim_middle|>'

print_info: FIM PAD token = 151662 '<|fim_pad|>'

print_info: FIM REP token = 151663 '<|repo_name|>'

print_info: FIM SEP token = 151664 '<|file_sep|>'

print_info: EOG token = 128247 '</s>'

print_info: EOG token = 151643 '<|endoftext|>'

print_info: EOG token = 151645 '<|im_end|>'

print_info: EOG token = 151662 '<|fim_pad|>'

print_info: EOG token = 151663 '<|repo_name|>'

print_info: EOG token = 151664 '<|file_sep|>'

print_info: max token length = 256

load_tensors: loading model tensors, this can take a while... (mmap = true, direct_io = false)

load_tensors: offloading output layer to GPU

load_tensors: offloading 27 repeating layers to GPU

load_tensors: offloaded 29/29 layers to GPU

load_tensors: CPU_Mapped model buffer size = 426.36 MiB

load_tensors: CUDA0 model buffer size = 5532.43 MiB

........................................................................................

common_init_result: added </s> logit bias = -inf

common_init_result: added <|endoftext|> logit bias = -inf

common_init_result: added <|im_end|> logit bias = -inf

common_init_result: added <|fim_pad|> logit bias = -inf

common_init_result: added <|repo_name|> logit bias = -inf

common_init_result: added <|file_sep|> logit bias = -inf

llama_context: constructing llama_context

llama_context: n_seq_max = 4

llama_context: n_ctx = 64000

llama_context: n_ctx_seq = 64000

llama_context: n_batch = 2048

llama_context: n_ubatch = 512

llama_context: causal_attn = 1

llama_context: flash_attn = enabled

llama_context: kv_unified = true

llama_context: freq_base = 1000000.0

llama_context: freq_scale = 1

llama_context: n_ctx_seq (64000) < n_ctx_train (131072) -- the full capacity of the model will not be utilized

llama_context: CUDA_Host output buffer size = 2.32 MiB

llama_kv_cache: CUDA0 KV buffer size = 683.72 MiB

llama_kv_cache: TurboQuant rotation matrices initialized (128x128)

llama_kv_cache: size = 683.59 MiB ( 64000 cells, 28 layers, 4/1 seqs), K (turbo3): 341.80 MiB, V (turbo3): 341.80 MiB

llama_kv_cache: upstream attention rotation disabled (TurboQuant uses kernel-level WHT)

llama_kv_cache: attn_rot_k = 0, n_embd_head_k_all = 128

llama_kv_cache: attn_rot_v = 0, n_embd_head_k_all = 128

sched_reserve: reserving ...

sched_reserve: resolving fused Gated Delta Net support:

sched_reserve: fused Gated Delta Net (autoregressive) enabled

sched_reserve: fused Gated Delta Net (chunked) enabled

sched_reserve: CUDA0 compute buffer size = 311.00 MiB

sched_reserve: CUDA_Host compute buffer size = 139.01 MiB

sched_reserve: graph nodes = 1015

sched_reserve: graph splits = 2

sched_reserve: reserve took 111.08 ms, sched copies = 1

common_init_from_params: warming up the model with an empty run - please wait ... (--no-warmup to disable)

srv load_model: initializing slots, n_slots = 4

no implementations specified for speculative decoding

slot load_model: id 0 | task -1 | speculative decoding context not initialized

slot load_model: id 0 | task -1 | new slot, n_ctx = 64000

no implementations specified for speculative decoding

slot load_model: id 1 | task -1 | speculative decoding context not initialized

slot load_model: id 1 | task -1 | new slot, n_ctx = 64000

no implementations specified for speculative decoding

slot load_model: id 2 | task -1 | speculative decoding context not initialized

slot load_model: id 2 | task -1 | new slot, n_ctx = 64000

no implementations specified for speculative decoding

slot load_model: id 3 | task -1 | speculative decoding context not initialized

slot load_model: id 3 | task -1 | new slot, n_ctx = 64000

srv load_model: prompt cache is enabled, size limit: 8192 MiB

srv load_model: use `--cache-ram 0` to disable the prompt cache

srv load_model: for more info see https://github.com/ggml-org/llama.cpp/pull/16391

srv init: init: idle slots will be saved to prompt cache and cleared upon starting a new task

init: chat template, example_format: '<|im_start|>system

You are a helpful assistant<|im_end|>

<|im_start|>user

Hello<|im_end|>

<|im_start|>assistant

Hi there<|im_end|>

<|im_start|>user

How are you?<|im_end|>

<|im_start|>assistant

'

srv init: init: chat template, thinking = 0

main: model loaded

main: server is listening on http://192.168.1.4:8080

main: starting the main loop...

srv update_slots: all slots are idle

srv log_server_r: done request: GET / 192.168.1.62 200

srv log_server_r: done request: GET /bundle.css 192.168.1.62 200

srv log_server_r: done request: GET /bundle.js 192.168.1.62 200

srv log_server_r: done request: HEAD /cors-proxy 192.168.1.62 404

How Does It Work?

http://192.168.1.4:8080

- Change this to the local IP address of your machine, then open it in a browser to reach llama-server's built-in web UI.
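Loading a model takes a while, so from a script it helps to wait until the server is actually ready. The following sketch polls llama-server's `/health` endpoint, which answers 200 once the model is loaded. `wait_for_server` is a hypothetical helper name. 192.168.1.4 is our machine's address, so substitute yours.

```shell
# Poll $1/health until it answers with HTTP 200, or give up.
# $1 = base URL, e.g. http://192.168.1.4:8080
# $2 = number of attempts (default 30), one second apart
wait_for_server() {
  url=$1
  tries=${2:-30}
  i=1
  while :; do
    # -f makes curl fail on HTTP errors such as 503 while the model loads
    if curl -fsS --max-time 2 "$url/health" >/dev/null 2>&1; then
      echo "server ready at $url"
      return 0
    fi
    [ "$i" -ge "$tries" ] && break
    i=$((i + 1))
    sleep 1
  done
  echo "server at $url not ready after $tries attempts" >&2
  return 1
}
```

For example, `wait_for_server http://192.168.1.4:8080 && xdg-open http://192.168.1.4:8080`.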

- It works really well for a basic house 7B model. We won't spend much time on the plain model, because the real POWER comes when you make it agentic by adding external tools!

PLEASE NOTE: LLMS ARE OKAY, BUT AN LLM WITH AGENTIC TOOL CALLING THAT CAN COMPILE, CORRECT, AND REWRITE ITS CODE OVER AND OVER IS 10X MORE POWERFUL, EVEN IF IT'S JUST AN 8B.

- Adding agentic tool calling is only a little more work, and that is where your LLM gets a super power-up. The tools are not hard at all. We carefully documented them, from really basic calculator agents to highly powerful ones that can go on the internet, do research, and then come back and do work. Don't be overwhelmed; just work through each guide!

- Because this first model worked only 'okay', we immediately switched to another one with powerful agentic tooling options!
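The following sketch shows what a single tool-calling request looks like. It targets llama-server's OpenAI-compatible `/v1/chat/completions` endpoint, which accepts a `tools` list when the server is started with `--jinja`. The `calculator` tool, its schema and both helper names are hypothetical.

```shell
# Print an OpenAI-style chat request that offers the model one tool.
tool_request_json() {
  cat <<'EOF'
{
  "messages": [
    {"role": "user", "content": "What is 1234 * 5678? Use the calculator."}
  ],
  "tools": [
    {
      "type": "function",
      "function": {
        "name": "calculator",
        "description": "Evaluate a basic arithmetic expression",
        "parameters": {
          "type": "object",
          "properties": {"expression": {"type": "string"}},
          "required": ["expression"]
        }
      }
    }
  ]
}
EOF
}

# POST the request to $1 (server base URL) and print the raw JSON reply.
send_tool_request() {
  tool_request_json | curl -sS "$1/v1/chat/completions" \
    -H 'Content-Type: application/json' -d @-
}
```

A tool-capable model may answer `send_tool_request http://192.168.1.4:8080` with a `tool_calls` entry naming `calculator` instead of plain text. A real agent loop would run the tool itself, append the result as a `tool` message, and send the whole conversation back.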

Upgrading to Qwen3.5-9B w/Agentic Tool Capability.

- Right away we went back and picked up a much newer model, one that specifically advertises its tool-calling capability!

You can pull it (from inside ~/models) with:

wget -O Qwen3.5-9B-UD-Q5_K_XL.gguf "https://huggingface.co/unsloth/Qwen3.5-9B-GGUF/resolve/main/Qwen3.5-9B-UD-Q5_K_XL.gguf?download=true"

We created another script for our new model and tested its agentic abilities:

/usr/bin/llama-server --jinja \

-m /home/c/models/Qwen3.5-9B-UD-Q5_K_XL.gguf \

--host 192.168.1.4 \

--n-gpu-layers 999 \

--override-tensor "\.ffn_.*_exps\.weight=CPU" \

--flash-attn on \

--cache-type-k turbo4 \

--cache-type-v turbo2 \

-c 32768 \

--temp 0.7

We were highly impressed. This model went straight to work, started correcting its own tool calls, and was still going strong at 12,000 tokens. Nice!
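With two models you end up with several near-identical scripts. One way to keep them in one place is a small parameterized launcher, offered here as a sketch. `launch_llama` and `DRY_RUN` are hypothetical names, and the flags simply mirror the two scripts in this post. Setting `DRY_RUN=1` prints the command instead of starting the server.

```shell
# $1 = model path, $2 = host, $3 = context size, $4 = K cache type, $5 = V cache type
launch_llama() {
  # Arguments are expanded before `set --` replaces them, so $1..$5 are the caller's.
  set -- /usr/bin/llama-server --jinja \
    -m "$1" --host "$2" \
    --n-gpu-layers 999 \
    --override-tensor '\.ffn_.*_exps\.weight=CPU' \
    --flash-attn on \
    --cache-type-k "$4" --cache-type-v "$5" \
    -c "$3" --temp 0.7
  if [ "${DRY_RUN:-0}" = 1 ]; then
    printf '%s\n' "$*"   # show the command line only
  else
    exec "$@"            # replace this shell with the server
  fi
}
```

For example: `launch_llama /home/c/models/Qwen3.5-9B-UD-Q5_K_XL.gguf 192.168.1.4 32768 turbo4 turbo2`.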

Adding one more Super Tool: LLMQP.

This lets your local LLM code all night. Instead of sitting there waiting between prompts, you can queue them up and have them run one after another.
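The following is not LLMQP's code. It is only a minimal sketch of the sequential-prompting idea. `run_queue` and `chat_payload` are hypothetical helpers. They send each line of a prompt file to llama-server's `/v1/chat/completions` endpoint in turn and save each raw JSON reply. The script uses python3 (installed in step 0) only to JSON-encode the prompt text safely.

```shell
# Build a chat request body for one prompt, with proper JSON escaping.
chat_payload() {
  python3 -c 'import json, sys; print(json.dumps({"messages": [{"role": "user", "content": sys.argv[1]}], "temperature": 0.7}))' "$1"
}

# Send each non-empty line of a prompt file to the server, one after another.
# $1 = prompt file, $2 = server base URL, $3 = output directory
run_queue() {
  mkdir -p "$3"
  n=0
  # "|| [ -n ... ]" also handles a last line without a trailing newline
  while IFS= read -r prompt || [ -n "$prompt" ]; do
    [ -n "$prompt" ] || continue
    n=$((n + 1))
    # curl reads the body from the pipe, not from the prompt file
    chat_payload "$prompt" |
      curl -sS "$2/v1/chat/completions" \
        -H 'Content-Type: application/json' -d @- > "$3/reply-$n.json"
    echo "finished prompt $n"
  done < "$1"
}
```

For example, `run_queue prompts.txt http://192.168.1.4:8080 replies` works through the file overnight, and each prompt starts only after the previous reply has been saved.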

Conclusion

Absolutely, you CAN get agentic-quality local LLMs working on very minimal house GPU parts. It comes down to how resourceful you are willing to be. It was also inferencing quickly, at ~45 tokens/s.

- This can be a very useful 'side-hustle' LLM that does your work with minimal effort!

- When it was done, our Code Drop tool showed that it had successfully created the following code package for us.