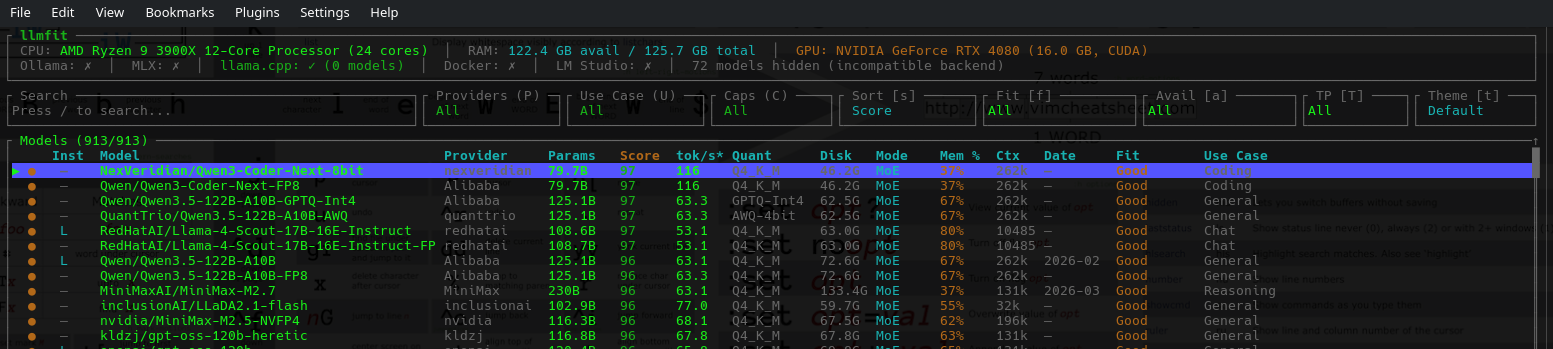

llmfit - Fast LLM Metric Fitter and Pulling Tool

We take a look at llmfit, a fast tool for checking which LLMs on the market will fit your hardware.

Very nice. Instead of wondering whether your system can handle a model, just run this!

Let's compile it from source!

It is written in Rust, so you need cargo! (And, naturally, your build-essential tools.)

`sudo apt install cargo git cmake gcc`

Once you have done that, you simply clone the repository and change into it:

`git clone https://github.com/AlexsJones/llmfit.git`

`cd llmfit`

Because this is Rust, you use cargo to build it:

`cargo build --release`

The output files will sit in target/release/.

- From inside target/release/, we copied lib* and llm* to /usr/bin/:

`sudo cp lib* /usr/bin/`

`sudo cp llm* /usr/bin/`

Running it!

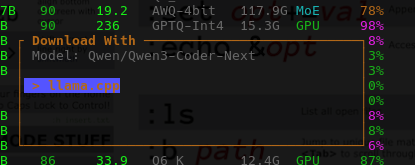

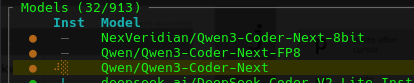

`llmfit`

It automatically lists all models that may fit your system, along with the estimated tokens/s you can expect if you run them locally.
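llmfit's exact formulas are not covered here, so the following is only a back-of-envelope sketch of the kind of estimate it shows, built on two common rules of thumb rather than llmfit's own method. First, quantized weights take roughly (billions of parameters × bits per weight ÷ 8) GB, plus an assumed 20% extra for KV cache and runtime buffers. Second, single-stream decoding is usually memory-bandwidth bound, so tokens/s is at most about bandwidth ÷ weight size. The function names, the 20% overhead, and the example hardware numbers are all hypothetical.

```rust
// Back-of-envelope sketch, NOT llmfit's actual algorithm.
// Assumptions (hypothetical): 20% memory overhead on top of the weights,
// and decoding speed limited by memory bandwidth.

/// Assumed overhead factor for KV cache and runtime buffers.
const OVERHEAD: f64 = 1.2;

/// Size of the quantized weights in GB: params (billions) * bits / 8.
fn weights_gb(params_billion: f64, bits_per_weight: f64) -> f64 {
    params_billion * bits_per_weight / 8.0
}

/// Estimated total memory needed to run the model, in GB.
fn estimated_memory_gb(params_billion: f64, bits_per_weight: f64) -> f64 {
    weights_gb(params_billion, bits_per_weight) * OVERHEAD
}

/// Does the model fit in the available memory?
fn fits(params_billion: f64, bits_per_weight: f64, available_gb: f64) -> bool {
    estimated_memory_gb(params_billion, bits_per_weight) <= available_gb
}

/// Rough upper bound on decode speed: each token reads every weight once,
/// so tokens/s <= bandwidth / weight size.
fn estimated_tokens_per_sec(params_billion: f64, bits_per_weight: f64, bandwidth_gb_s: f64) -> f64 {
    bandwidth_gb_s / weights_gb(params_billion, bits_per_weight)
}

fn main() {
    // Hypothetical machine: 16 GB usable memory, ~50 GB/s memory bandwidth.
    let available_gb = 16.0;
    let bandwidth_gb_s = 50.0;

    // Hypothetical models: (label, billions of parameters, bits per weight).
    let models = [
        ("7B @ 4-bit", 7.0, 4.0),
        ("13B @ 4-bit", 13.0, 4.0),
        ("70B @ 4-bit", 70.0, 4.0),
    ];

    for (label, params, bits) in models {
        let mem = estimated_memory_gb(params, bits);
        if fits(params, bits, available_gb) {
            let tps = estimated_tokens_per_sec(params, bits, bandwidth_gb_s);
            println!("{label}: ~{mem:.1} GB, fits, up to ~{tps:.1} tok/s");
        } else {
            println!("{label}: ~{mem:.1} GB, does not fit");
        }
    }
}
```

Under these assumptions the 70B model needs about 42 GB and is rejected on a 16 GB machine, while the 7B model needs about 4.2 GB and is bounded at roughly 50 ÷ 3.5 ≈ 14 tok/s. Real tools also account for context length, GPU offload and runtime specifics, which this sketch ignores.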

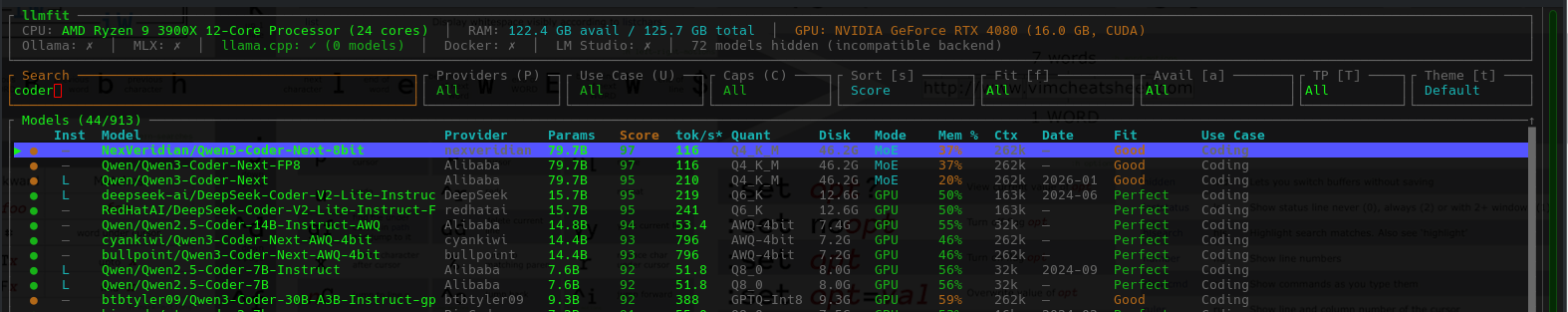

Pressing '/' and typing 'coder' filters the list as you type. I believe the model data is pulled straight from Hugging Face.
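Assuming the '/' search behaves like a typical TUI filter (an assumption, not taken from llmfit's source), it narrows the list with a case-insensitive substring match on model names. A minimal sketch, using a hypothetical `filter_models` function and illustrative model names:

```rust
/// Hypothetical illustration: keep only model names that contain the
/// query, ignoring case (e.g. "coder" matches "Qwen2.5-Coder-7B-Instruct").
fn filter_models<'a>(models: &[&'a str], query: &str) -> Vec<&'a str> {
    let q = query.to_lowercase();
    models
        .iter()
        .copied()
        .filter(|name| name.to_lowercase().contains(&q))
        .collect()
}

fn main() {
    // Illustrative model names only; not the list llmfit actually shows.
    let models = [
        "Qwen2.5-Coder-7B-Instruct",
        "Llama-3.1-8B-Instruct",
        "deepseek-coder-6.7b-base",
    ];
    for name in filter_models(&models, "coder") {
        println!("{name}");
    }
}
```

Lowercasing both sides keeps the match simple; a real interface might use fuzzy matching instead, which would also catch typos.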

Automatic Downloads

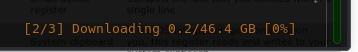

You can literally hit 'd' to download a model once you have found one you like.

The model being downloaded is shown in the bottom right:

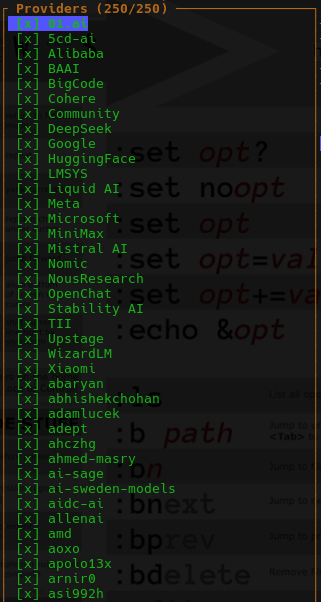

Hit 'P' (providers) and it automatically lists inference providers, in case you would rather use a cloud LLM. Nice!

Nice touches. Real attention to detail went into this text-based app: while a model is downloading, you can see a spinning progress indicator.

Simulate

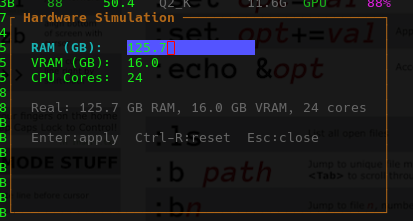

- Press 'S' to simulate what you might expect to see when the model runs.

Summary

- This saves a lot of time compared with navigating a pile of pages on huggingface.co, trying to work out whether models might run and what to expect from them. Tap a button and it pulls the latest model for you!

- If you download and run LLMs on a daily basis, or benchmark them, this tool is really handy.